I have long admired Soraya Chemaly. I interviewed the American feminist writer and activist for the fourth-anniversary cover of eShe in 2020, the year of the pandemic, and have followed her writing ever since.

I learnt about technology scholar Safiya U. Noble a bit later, while doing my Master’s with a focus on digital media and social change. Her pathbreaking book Algorithms of Oppression: How Search Engines Reinforce Racism (2018) was prescribed as part of our coursework.

So it was with great fan-girl enthusiasm that I attended a recent webinar, “Uncovering the Gaze: The Female Body, Nipple Politics and Digital Culture”, which brought the two intellectuals together on the same platform.

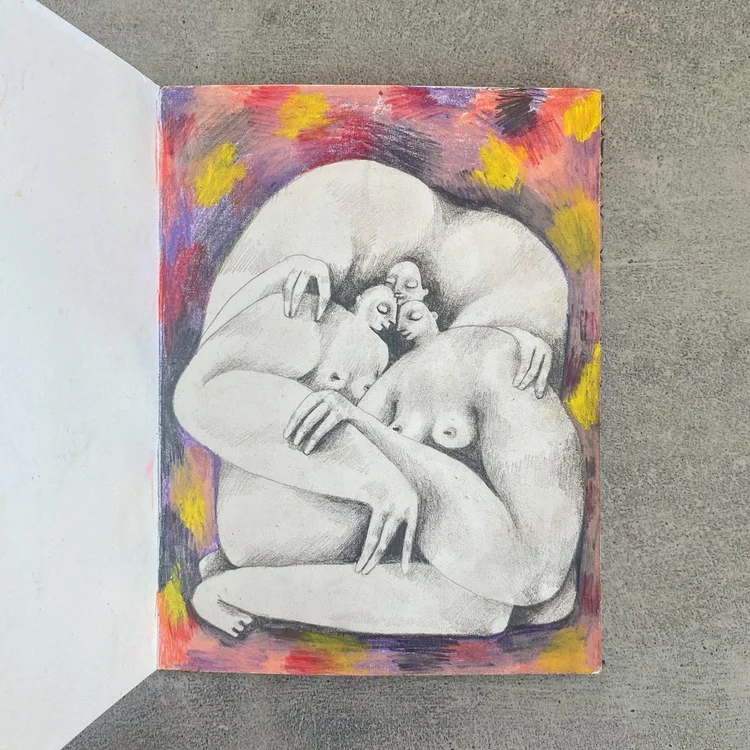

Organised by the National Coalition Against Censorship, the webinar was part of a collaboration between two people’s movements – Don’t Delete Art, a project defending artistic freedom online, and Free the Nipple, a global body-equality movement founded by activist and filmmaker Lina Esco over 13 years ago.

This panel discussion was part of a series of live talks with scholars and activists, alongside artist spotlights, meant to raise awareness about how female bodies are interpreted, regulated and circulated online through content moderation systems, algorithms and AI technologies.

The core issue was how social media companies like Meta prohibit images of female-appearing nipples and breasts while allowing bare-chested images of men – even when the image depicts art, protest, indigenous cultures or medical information.

The absurdity of the female nipple ban

At a time when deepfakes, AI-generated porn and non-consensual intimate images are openly created and distributed using real women’s faces and bodies – generating billions of dollars in profit for technology firms and the porn industry – it is absurd that the same tech capitalist systems ban self-representations of bodies by women and LGBTQ individuals for the sake of art, protest or education.

As the panelists explained, the regulation of women’s bodies serves as a primary way of maintaining intersecting systems of sexist, racist, xenophobic and homophobic hierarchies. When women claim their nudity for themselves, it becomes an automatic ‘transgression’ within these male supremacist frameworks.

The conversation traced how these platform policies emerged from early manual content-moderation practices (2010–2012) that reproduced traditional cultural hierarchies, and how these biases are now encoded into algorithmic systems and AI datasets, making them increasingly difficult to challenge.

Chemaly, author of books such as Rage Becomes Her (2018) and All We Want Is Everything (2025), explained: “These platforms believed that it was their role as ‘neutral platforms’ – and I use that term very carefully – just to ‘reflect culture’. Which is really an impossible and kind of ridiculous statement when you are moderating content from literally all over the world. It means that you have to create some baseline, universalizing structure to do that, with very few people and resources dedicated to that effort. So, what they did, by default, was reproduce white patriarchal colonial structures of knowledge, information, morals and definitions of what constituted acceptable and unacceptable content, and… in that traditional framework, women’s nudity was highly regulated.”

Early platform policies even prohibited breastfeeding images, mastectomy pictures, health information showing women’s bodies, and LGBTQ community content that might need to represent bodies – all rolled up under the label of “nudity”.

The activism to change these policies focused on the fact that nudity is used politically and artistically, particularly by women whose oppression is embodied. Chemaly shared a particularly stark example involving trans activists documenting their transition online. “One day their nipples went from ‘male-coded’ to ‘female-coded’ and their entire accounts were wiped out,” she said.

She also mentioned the ‘male nipple template’ art project by Micol Hebron, which vividly illustrated the ridiculousness of these policies by creating a sticker of a male nipple that women could paste over their own nipples, since theoretically a male nipple was acceptable. This highlighted the arbitrary and discriminatory nature of the rules.

“In effect,” Chemaly stated, “when you ban images of the [female] nipple, you’re conceding to the conflation of all women’s nudity with pornification and sexualization.”

Another example Chemaly shared involved Brazil’s Ministry of Indigenous Rights publishing a historical photo (circa 1910) of an Indigenous woman topless in traditional clothing, which Facebook removed – they couldn’t allow nipples to be shown even historically by Indigenous people representing themselves.

In 2023, Meta’s Oversight Board finally concluded that nipple censorship represented gender discrimination and gender identity discrimination, leading to some rule changes.

Embedding bias in algorithmic systems

As Noble explained, the implicit biases of culture became explicitly codified through human content moderation and are now disappearing into the architecture of algorithmic systems. “The nipple was symbolic of these tensions. Who was deciding? Why were they making those decisions?” asked the renowned professor, who holds the David O. Sears Presidential Endowed Chair of Social Sciences at the University of California, Los Angeles.

Noble also noted that when an AI system learns from data where 90 percent of representations of Black women are pornographic, it will categorize any image of a Black woman through that lens. It can also filter out self-representations of naked bodies as “solicitation for sex” by default, even if the image is artistic, political or simply a person existing.

This is a result of human biases encoded in technology. Observations of content moderation call centers in Europe showed moderators “actively keeping racist and sexist material under the auspices of free speech or First Amendment protections”, while removing content that was “clearly political critique or response to racism and sexism”, Noble said.

Even so, the industry’s narrative positions technology as a “neutral” tool, with failures blamed on users rather than on larger issues like content moderation, algorithmic discrimination, user profiling, and selling attention to advertisers.

The “neutrality” claim serves as a shield against accountability, Chemaly further noted. By saying they’re merely reflecting existing cultural norms, platforms avoid taking responsibility for how their policies actively construct what becomes visible and acceptable.

This is particularly insidious because it makes these biases appear natural and inevitable rather than the result of choices made by specific people with specific worldviews.

AI failures and misrepresentation

Noble also brought up the bias inherent in AI chatbots built on large language models (LLMs), which feature anthropomorphized, human-like interfaces, often with female voices. This plays a role in socializing people to see women as being in service to others.

The tech industry has also sexualized AI chatbots, turning them into companions or girlfriends, and embedding them in humanoid robots to create what amounts to “sex slaves that can’t say no and have no agency”, Noble shared, adding that many technological developments – from credit card processing to video processing – were driven by the porn industry and men’s sexual desires.

These LLM products have “stolen” artifacts including books, articles, interviews and subreddits to train large models allegedly smarter than humans, though nothing could be further from the truth. In fact, Noble explained, the narrative that “technology is smarter than human beings” emerged at the dawn of the Civil Rights Movement in the 1950s–60s, with the aim of undermining multiracial, multi-gendered democratic participation.

Studies show these LLMs are grossly misrepresentative and stereotypical. One study showed a program unable to render a picture of a Black doctor helping White children, Noble shared. These systems could only represent Africa by putting a giraffe in the image or placing people in the Sahara, unable to fathom global cosmopolitan cities on the continent.

Meanwhile, “LLMs easily render women according to hegemonic white supremacist beauty standards and refuse to create bodies that aren’t constrained by narrow pornified logics,” Noble said.

Why tech leaders embrace destructive ideologies

The speakers also brought up the issue of platforms prioritizing advertiser concerns over user needs. Since the porn industry pays platforms directly and advertisers want to avoid association with content that can be “controversial” (like women’s bodies in non-sexualized contexts!), the result is that sexualized content is monetized while feminist, artistic or political content is suppressed.

Noble and Chemaly also dwelt on “The TESCREAL Bundle”, a paper by Timnit Gebru and Emile P. Torres that explores ideologies such as transhumanism, singularitarianism and longtermism that are now mainstream among tech industry leaders. These worldviews share a eugenicist origin story and a belief that some lives are more valuable than others, that most people are expendable, and that democracy is unnecessary or harmful.

When tech leaders like Eric Schmidt say we should “go all in on artificial general intelligence” despite the climate crisis, or when “they build bunkers in New Zealand”, they’re expressing a genuine belief that technological advancement for a select few is more important than collective survival, Noble said. She recommended the new investigative documentary Ghost in the Machine (2026) for tracing these connections explicitly.

This helps us understand why platforms make seemingly irrational decisions that harm users – the decisions aren’t irrational from the perspective of industry leaders who explicitly reject democratic values and believe in hierarchy based on race, gender and class.

Or to put it in the words of feminist theorist bell hooks, it’s the white supremacist capitalist patriarchy. The unfree nipple is just one symptom of a larger malaise.
